BUILDING ACCOUNTABILITY WHERE AI GOVERNANCE FALLS SHORT

Who We Are

Ethical AI Alliance is driven by a core interdisciplinary team of 50+ active contributors working across technology, AI governance, human rights, research, design, the arts, and law.

Beyond this core, 1,000+ people have signed up to contribute to the Alliance’s work, forming a wider constituency engaged in shaping and supporting its direction.

We are global by design. Contributors span four continents, with active participation across Europe, North America, Africa, and Asia, including people based in Spain, the UK, the US, Morocco, Nigeria, Thailand, Bhutan, and beyond. While the Alliance is coordinated from Spain, its work is rooted in distributed collaboration and shared governance rather than in a single institutional center.

AI systems are being deployed across societies faster than existing governance mechanisms can account for their harms. While AI policy discussions multiply, accountability remains fragmented, reactive, or inaccessible to those most affected.

Ethical AI Alliance is a global, interdisciplinary governance lab building shared visibility and accountability practices to address this gap.

-

AI governance is often designed from the top down, through principles, policies, and regulatory frameworks that remain distant from how harm is actually experienced. Ethical AI Alliance operates as a governance laboratory, bringing together interdisciplinary perspectives to test how accountability can be built in practice.

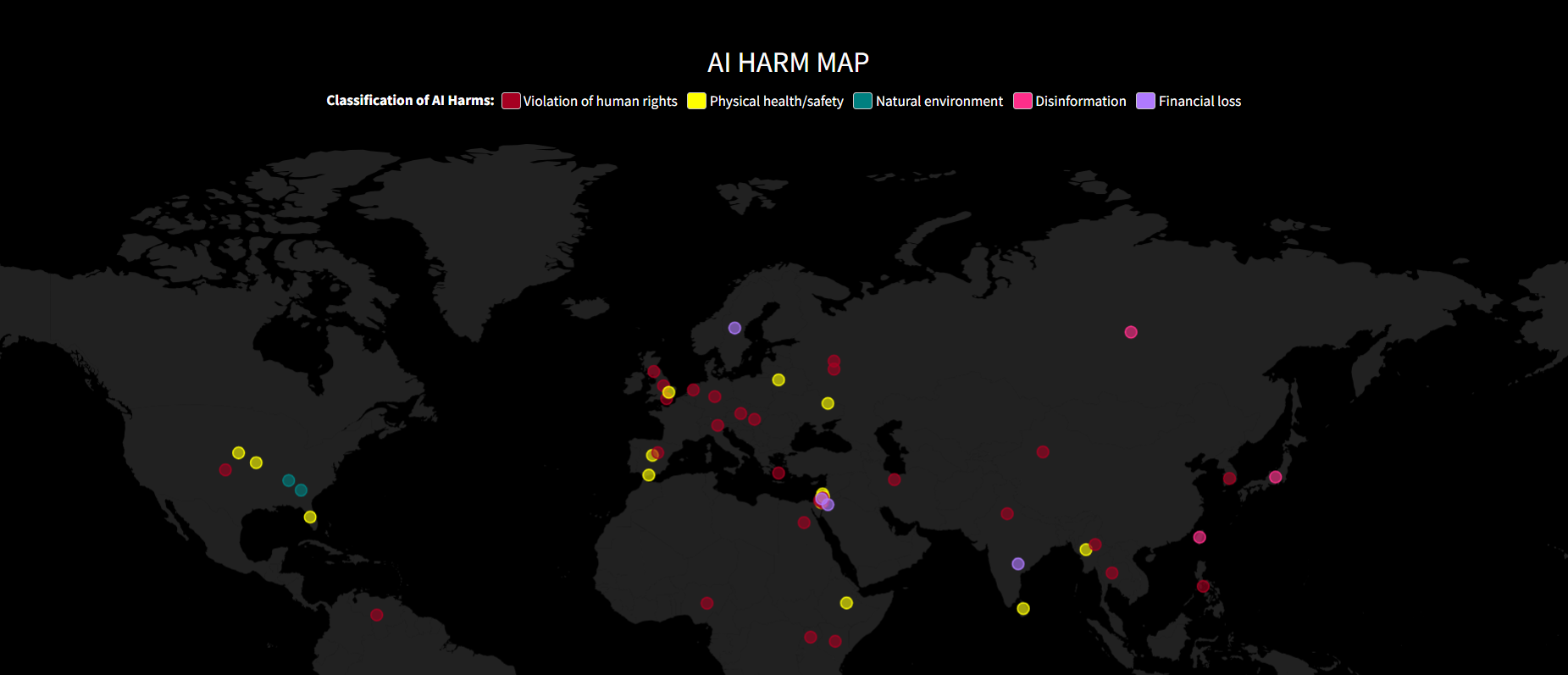

We have learned that harms are rarely isolated incidents. Seen collectively, they reveal patterns that point to systemic design choices and governance failures. Accountability must therefore begin with lived experience and collective judgment, and be shaped upward into governance rather than imposed from above.

-

We work through agile, interdisciplinary sprints that bring together governance, technical, and lived-experience perspectives.

Rather than starting from fixed solutions, we experiment, prototype, and iterate in response to real-world conditions.

Our work is co-created with practitioners and civil society actors on the ground, applying practical methods to governance questions that are often treated only at the level of vision or policy.

What We’ve Built So Far

Over the past year, we have moved from principles to practice.

This includes clear boundary-setting through Clause 0, early governance infrastructure such as the AI Harm Map, and exploratory work on systemic harm reporting and escalation.

Resources

Get Involved

This work depends on collective judgment and sustained care.

There are different ways to engage, from contributing expertise to supporting the infrastructure that makes this work possible.